Introduction

Boards and executive teams are approving AI faster than they're assessing the risks it introduces. According to IBM's 2025 research, 72% of companies now integrate AI into at least one business function—yet only 23.8% have governance frameworks that address technology, third-party, and model risks "to a large extent." Nearly 74% report moderate or limited coverage, leaving leadership exposed to risks they cannot articulate.

AI risk isn't a technical problem relegated to the IT department. It surfaces in governance, liability, regulatory exposure, and reputational harm, all of which land on the board's agenda. Some 72% of S&P 500 companies now flag AI as a material risk in their public disclosures, up from just 12% in 2023. Boards that cannot explain how AI risk is being managed, who owns it, and what the response looks like when it materializes are already behind.

TL;DR

- An AI risk assessment identifies, categorizes, and prioritizes risks AI systems introduce across your organization

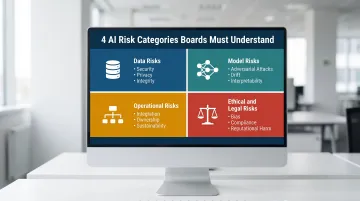

- Risks fall into four categories: data risks, model risks, operational risks, and ethical/legal risks

- Effective assessments follow a repeatable six-step process—from scope definition and use mapping through ownership assignment and ongoing monitoring

- NIST AI RMF, EU AI Act, and ISO/IEC 42001 provide useful baselines, though none work without clear decision rights and executive ownership behind them

- AI risk assessment is continuous, not one-time, and requires board-level visibility to remain defensible

What Is an AI Risk Assessment?

An AI risk assessment is a systematic process for identifying, evaluating, and documenting the risks associated with AI systems (including both internal AI tools and third-party AI vendors) across the full AI lifecycle. It surfaces what could go wrong, where exposure exists, and which risks require immediate action versus monitoring.

Understanding the distinction between assessment, management, and governance matters for boards:

- Assessment is investigative: What could go wrong? Where are the blind spots?

- Management is action-based: What do we do about identified risks? Who owns mitigation?

- Governance is the overarching framework that sets the rules for both—defining decision rights, escalation thresholds, and accountability structures

Boards need clarity on all three, particularly where their oversight responsibility sits versus what management owns and executes.

AI risk assessments can be qualitative or quantitative, reactive or proactive. Triggers include:

- New AI deployments or vendor onboarding

- Regulatory changes affecting AI use

- M&A activity introducing inherited AI systems

- Incidents or near-misses that expose gaps

Waiting for the annual cycle to surface these risks means decisions get made without the visibility boards are accountable for.

The 4 Types of AI Risk Every Board Should Know

Before an organization can assess AI risk, leadership needs a shared vocabulary. These four categories apply whether AI is built in-house or procured from a vendor. Without this vocabulary, boards cannot ask the right questions — and cannot hold management accountable for the answers.

Data Risks

AI systems depend on data—and compromised, biased, or poorly governed data corrupts outputs and creates liability. Data risks fall into three sub-categories:

- Data security: Breach and unauthorized access exposing sensitive information

- Data privacy: PII handling failures that trigger regulatory exposure under GDPR, CCPA, or sector-specific rules

- Data integrity: Biased or low-quality training data that produces flawed decisions, erodes trust, and introduces legal risk

When a model is trained on unlawfully processed personal data, the European Data Protection Board has indicated that later deployment of that model can also be unlawful (sometimes described as the "tainted model" problem). Governance failures at the training stage can therefore undermine the entire system.

Model Risks

The AI system itself is a source of risk — in how it performs, how it degrades, and how it can be manipulated:

- Adversarial attacks and prompt injection: Malicious inputs that manipulate outputs, bypassing controls or extracting sensitive information

- Model drift: Performance degradation over time as real-world data diverges from training data, producing unreliable results

- Lack of interpretability: "Black-box" models that make decisions no one can explain—a serious problem in regulated industries where accountability is non-negotiable

The CFPB made this explicit in 2023: lenders using AI for credit decisions must provide specific reasons for denials, even when using complex models. There is no "special exemption" for model complexity.
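Of the model risks listed above, drift is the one most easily turned into a number a board report can track. The sketch below uses the Population Stability Index (PSI), a common drift metric that the source does not name; it is swapped in here purely for illustration. The function name `psi`, the bin edges, and the sample values are all assumptions.

```python
import math

def psi(expected, actual, edges):
    """Population Stability Index between a baseline and a recent sample.

    `edges` are ascending bin boundaries (assumed, chosen by the analyst).
    """
    def shares(values):
        counts = [0] * (len(edges) + 1)
        for v in values:
            # Index of the bin this value falls into.
            counts[sum(v >= e for e in edges)] += 1
        # A small floor keeps empty bins from producing log(0).
        return [max(c / len(values), 1e-6) for c in counts]

    e, a = shares(expected), shares(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))

# Illustrative model scores at training time versus in production.
baseline = [0.1, 0.2, 0.3, 0.4, 0.5, 0.6, 0.7, 0.8]
recent = [0.5, 0.6, 0.7, 0.8, 0.9, 0.9, 0.95, 0.99]
edges = [0.25, 0.5, 0.75]

drift_score = psi(baseline, recent, edges)
```

A score of zero means the two samples fall into the bins in identical proportions; larger values mean the production data has moved away from the training data. The level at which a score triggers review is a governance decision, not something the metric supplies.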

Operational Risks

Governance structure — or the absence of one — determines whether AI deployments remain manageable or quietly accumulate operational exposure:

- Integration failures: AI systems that don't work with existing infrastructure, creating workarounds or shadow AI deployments

- Lack of clear ownership: No one accountable for AI decisions, incident response, or ongoing monitoring

- Sustainability challenges: AI systems that scale without governance structures to support them

McKinsey's 2025 research found that only 39% of Fortune 100 companies disclosed any form of board oversight of AI as of 2024, and fewer than 25% have board-approved, structured AI policies. When governance doesn't exist at the board level, operational risks compound across the organization without visibility or escalation paths.

Ethical and Legal Risks

Ethical and legal risks create fiduciary exposure boards cannot ignore:

- Algorithmic bias: Non-representative training data producing discriminatory outcomes in hiring, lending, or customer-facing decisions

- Regulatory non-compliance: Violations of GDPR, the EU AI Act, sector-specific rules, or evolving state-level AI regulations

- Reputational harm: When AI decisions can't be explained or defended publicly, trust erodes and brand value declines

The litigation risk here is concrete. The class action Kistler v. Eightfold AI alleges an AI hiring tool scored applicants on a zero-to-five scale and auto-rejected low-ranked candidates before any human review — a potential Fair Credit Reporting Act violation carrying statutory damages of $100 to $1,000 per willful violation.

These four risk types don't stay in the IT department. Each one creates fiduciary exposure — and regulators, plaintiffs, and institutional investors are increasingly asking whether boards can demonstrate they understood the risks before something went wrong.

How to Conduct an AI Risk Assessment: Step by Step

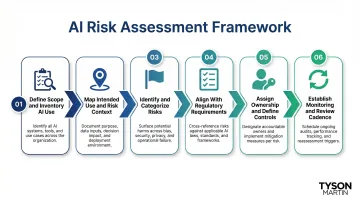

Six steps take an AI risk assessment from blank page to board-ready output. Each one builds on the last — and each produces something inspectable, not just documented.

Step 1 — Define Scope and Inventory AI Use

Identify every AI system in use, shadow AI included: internal tools, vendor-supplied AI, and AI embedded within third-party platforms. Without a complete inventory, the assessment has blind spots.

Key actions:

- Document all AI tools, platforms, and vendors

- Include generative AI tools employees may be using without formal approval

- Catalog AI embedded in SaaS platforms and third-party services

- Assign preliminary owners to each system

Metrics impacted: Coverage completeness, governance accountability
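One way to make the inventory inspectable is to keep it as structured records rather than a slide. The following is a minimal sketch, not a prescribed schema: the `AISystem` fields, the `source` labels, and the sample systems are hypothetical.

```python
from dataclasses import dataclass
from typing import Optional

@dataclass
class AISystem:
    name: str
    source: str                  # "internal", "vendor", or "embedded" (assumed labels)
    approved: bool               # False marks shadow AI found during discovery
    owner: Optional[str] = None  # preliminary owner, if one has been assigned

def coverage_gaps(inventory):
    """Return systems that lack an owner or were never formally approved."""
    return [s for s in inventory if s.owner is None or not s.approved]

# Hypothetical inventory entries.
inventory = [
    AISystem("resume-screener", "vendor", approved=True, owner="HR Ops"),
    AISystem("chat-assistant", "embedded", approved=True),
    AISystem("personal-genai-account", "internal", approved=False),
]

gaps = coverage_gaps(inventory)
owned_share = sum(s.owner is not None for s in inventory) / len(inventory)
```

Here `gaps` lists the systems that still need attention, and `owned_share` gives a simple coverage figure that can be reported from one briefing to the next.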

Step 2 — Map Intended Use and Risk Context

For each AI system, document its intended purpose and who it affects — employees, customers, or regulated individuals. Note what data it processes and what decisions it influences or automates. That context determines which risk categories apply and at what severity.

Key questions:

- What business function does this AI system serve?

- Does it process personal data or make decisions about people?

- Is it used in a regulated context (hiring, lending, healthcare)?

- What happens if the system produces incorrect or biased outputs?

Metrics impacted: Risk scoping accuracy, stakeholder clarity

Step 3 — Identify and Categorize Risks

Walk the full AI lifecycle—training, deployment, ongoing use—and surface risks across all four categories: data, model, operational, and ethical/legal. Use a consistent rating approach (likelihood × impact) to begin prioritizing.

Approach:

- Assess data risks: security, privacy, integrity

- Assess model risks: adversarial attacks, drift, interpretability

- Assess operational risks: integration, ownership, sustainability

- Assess ethical/legal risks: bias, compliance, reputational harm

Metrics impacted: Risk completeness, prioritization quality
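The likelihood × impact rating in this step can be sketched in a few lines. The 1-to-5 scales, the band cut-offs, and the sample risks below are assumptions for illustration; each organization sets its own.

```python
from dataclasses import dataclass

# The four categories used in this article.
CATEGORIES = {"data", "model", "operational", "ethical_legal"}

@dataclass
class Risk:
    system: str
    category: str
    description: str
    likelihood: int  # 1 (rare) to 5 (almost certain), assumed scale
    impact: int      # 1 (negligible) to 5 (severe), assumed scale

    def __post_init__(self):
        if self.category not in CATEGORIES:
            raise ValueError(f"unknown category: {self.category}")
        for value in (self.likelihood, self.impact):
            if not 1 <= value <= 5:
                raise ValueError("ratings must be between 1 and 5")

    @property
    def score(self) -> int:
        return self.likelihood * self.impact

def band(score: int) -> str:
    # Illustrative cut-offs only.
    if score >= 15:
        return "high"
    if score >= 8:
        return "medium"
    return "low"

# Hypothetical risks for three systems.
risks = [
    Risk("forecasting-model", "model", "drift", 2, 2),
    Risk("resume-screener", "ethical_legal", "biased ranking", 3, 5),
    Risk("chat-assistant", "model", "prompt injection", 4, 3),
]

# Highest score first, so the top of the list is where attention goes.
ranked = sorted(risks, key=lambda r: r.score, reverse=True)
```

The multiplication is deliberately crude. Its value is consistency: every system is rated the same way, so the ranking can be challenged and defended.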

Step 4 — Align With Regulatory and Framework Requirements

Cross-reference identified risks against applicable regulations (EU AI Act, GDPR, CCPA, sector-specific standards) and established frameworks (NIST AI RMF, ISO/IEC 42001). Note where gaps exist between current controls and what compliance or best practice requires.

Key outputs:

- Gap analysis showing where controls fall short

- Mapping of AI systems to regulatory requirements

- Documentation of compliance posture by system

Metrics impacted: Compliance posture, defensibility of decisions
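A gap analysis of this kind reduces to comparing what is required with what is in place, system by system. The sketch below assumes hypothetical control names and systems; the real requirements come from the regulation or framework text and from counsel, not from this example.

```python
# Controls each system is expected to have (hypothetical names).
required = {
    "resume-screener": {"human_review", "bias_testing", "adverse_action_notice"},
    "chat-assistant": {"ai_disclosure", "logging"},
}

# Controls actually implemented today (hypothetical).
implemented = {
    "resume-screener": {"bias_testing"},
    "chat-assistant": {"ai_disclosure", "logging"},
}

def gap_analysis(required, implemented):
    """Map each system to the sorted list of required controls not yet in place."""
    return {
        system: sorted(controls - implemented.get(system, set()))
        for system, controls in required.items()
    }

gaps = gap_analysis(required, implemented)
```

An empty list for a system means no gap was found against the controls listed, which is only as reliable as the `required` mapping itself.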

Step 5 — Assign Ownership and Define Controls

Translate assessment findings into accountable actions: who owns each risk, what controls or mitigations apply, and what the escalation threshold is if a risk materializes. This is where decision rights get codified—a critical gap in most organizations.

Required elements:

- Named risk owner for each identified risk

- Specific mitigation or control measure

- Escalation threshold and process

- Timeline for implementation

Metrics impacted: Accountability clarity, incident response readiness
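The four required elements map naturally onto a risk register entry. This is a minimal sketch under assumed field names; `RegisterEntry`, the score-based threshold, and the sample values are hypothetical rather than a standard format.

```python
from dataclasses import dataclass
from datetime import date

@dataclass
class RegisterEntry:
    risk: str
    owner: str                 # named individual or role
    control: str               # mitigation or control measure
    escalation_threshold: int  # score at or above which the board is informed
    due: date                  # implementation deadline

    def missing_fields(self):
        """List required text fields that were left blank."""
        return [f for f in ("risk", "owner", "control") if not getattr(self, f).strip()]

def needs_escalation(entry: RegisterEntry, current_score: int) -> bool:
    """True when the current risk score meets or exceeds the entry's threshold."""
    return current_score >= entry.escalation_threshold

# Hypothetical entry with no owner assigned yet.
entry = RegisterEntry(
    risk="biased ranking in resume-screener",
    owner="",
    control="quarterly bias testing",
    escalation_threshold=15,
    due=date(2026, 3, 31),
)
```

Calling `entry.missing_fields()` on this example flags the blank owner, which is exactly the accountability gap this step is meant to close.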

Step 6 — Establish Monitoring and Review Cadence

AI systems evolve, threat landscapes shift, and regulations change. Define how often the assessment will be refreshed, what triggers an out-of-cycle review (new AI deployment, incident, M&A, regulatory update), and how risk posture will be reported to the board in plain language.

The business case for this cadence is clear: according to Gartner research, organizations that perform regular AI system audits and compliance assessments are over three times more likely to achieve high GenAI value than those that do not.

Metrics impacted: Risk currency, board-level visibility
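The cadence rule described in this step, a scheduled refresh plus out-of-cycle triggers, can be written down explicitly so it is not left to memory. The trigger names below mirror the examples in the text; the 90-day interval and the function name `review_due` are assumptions.

```python
from datetime import date, timedelta

# Events that force a review regardless of the schedule.
OUT_OF_CYCLE_TRIGGERS = {"new_deployment", "incident", "m_and_a", "regulatory_update"}

# Assumed refresh interval; set this to match your own governance calendar.
REVIEW_INTERVAL = timedelta(days=90)

def review_due(last_review: date, today: date, events=()) -> bool:
    """True if the interval has elapsed or any trigger event has occurred."""
    if any(e in OUT_OF_CYCLE_TRIGGERS for e in events):
        return True
    return today - last_review >= REVIEW_INTERVAL
```

For example, `review_due(date(2025, 1, 1), date(2025, 2, 1), ["incident"])` returns `True` even though only a month has passed, because an incident is an out-of-cycle trigger.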

AI Risk Assessment Frameworks Worth Knowing

Frameworks provide a structured, recognized baseline that makes your AI risk posture defensible to regulators, auditors, and the board, while reducing the effort of designing a methodology from scratch. However, frameworks are guides, not substitutes for governance. An organization can reference NIST AI RMF and still have no one accountable for acting on what it surfaces.

NIST AI RMF

A voluntary, widely adopted U.S. framework organized around four functions—Govern, Map, Measure, Manage. Released in January 2023, it's applicable across industries and geographies, making it useful as an internal governance baseline.

Four core functions:

- Govern: Cultivate a culture of risk management with policies, accountability structures, transparency, and third-party risk policies

- Map: Establish context to frame risks—understanding capabilities, categorization, and system characterization

- Measure: Employ tools and methodologies to analyze, assess, benchmark, and monitor AI risk and trustworthiness

- Manage: Allocate risk management resources to mapped and measured risks on a regular basis

Best for: Organizations seeking a flexible, principle-based approach to AI risk management that can scale across business units and geographies.

EU AI Act

A risk-tiered regulatory framework classifying AI systems by potential harm. Mandatory for organizations operating in the EU; referenced globally as a compliance baseline even outside Europe.

Risk tiers:

- Unacceptable Risk: Prohibited AI systems (social scoring, certain biometric identification)

- High Risk: Strict compliance obligations for AI affecting safety or fundamental rights (critical infrastructure, healthcare, employment, law enforcement)

- Limited Risk: Transparency requirements (chatbots, deepfakes—users must know they're interacting with AI)

- Minimal Risk: No new obligations (AI-enabled games, spam filters)

Compliance obligations began phasing in from February 2025 and continue through August 2027, following the Act's official implementation timeline.
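For a first-pass triage of an inventory, the tier list above can be encoded as a simple lookup. This is only a sketch: the example use cases are taken from the list, the names `Tier`, `EXAMPLES`, and `triage` are hypothetical, and actual classification under the Act is a legal determination that a lookup table cannot make.

```python
from enum import Enum

class Tier(Enum):
    UNACCEPTABLE = "prohibited"
    HIGH = "strict compliance obligations"
    LIMITED = "transparency requirements"
    MINIMAL = "no new obligations"

# Example use cases from the tier list; not a legal classification.
EXAMPLES = {
    "social scoring": Tier.UNACCEPTABLE,
    "employment": Tier.HIGH,
    "critical infrastructure": Tier.HIGH,
    "chatbot": Tier.LIMITED,
    "spam filter": Tier.MINIMAL,
}

def triage(use_case: str):
    """Return the example tier, or None to flag the system for legal review."""
    return EXAMPLES.get(use_case.lower())
```

Returning `None` for anything not in the table is the important design choice: an unknown use case is routed to review rather than silently treated as minimal risk.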

Best for: Organizations with EU operations or global footprints where harmonized AI governance is strategically valuable.

ISO/IEC 42001 / 42005

International standards for AI management systems and AI impact assessments, both oriented toward organizations that need governance structures auditors and regulators can inspect.

What each standard covers:

- ISO/IEC 42001 (December 2023): Requirements for establishing, implementing, and maintaining an AI management system

- ISO/IEC 42005 (2025): Guidance for conducting AI system impact assessments at the project or deployment level

Best for: Organizations operating across multiple jurisdictions that need a certifiable, audit-ready AI governance structure—particularly where EU, U.S., and international regulators all have visibility.

Common Mistakes That Undermine AI Risk Assessments

Treating It as a One-Time Compliance Exercise

AI risk is dynamic. Organizations that conduct a single assessment and file it away create a false sense of security. Risk posture changes every time a new AI tool is deployed, a vendor updates their model, or a regulation is enacted. McKinsey's 2026 research shows that nearly 60% of respondents cite knowledge and training gaps as the primary barrier to responsible AI, up from approximately 50% the prior year. Continuous assessment helps narrow that gap by keeping the organization's understanding of its AI risks current.

Keeping It Inside IT Without Board Visibility

When AI risk assessment lives entirely within the technology function, the board loses its ability to provide meaningful oversight. Findings need to reach leadership in plain language—not buried in technical jargon—with clear escalation paths and owners attached.

The gap is significant: only 15% of boards currently receive AI-related metrics, according to McKinsey's 2025 survey of directors. That leaves most boards approving AI strategy without the data to challenge it.

Ignoring Third-Party and Vendor AI Risk

Many organizations assess their own AI systems but overlook the AI embedded in vendor platforms and third-party tools. The numbers reflect how common this blind spot is:

- IBM's 2025 research found 74% of organizations have moderate or limited coverage of third-party AI risk in their governance frameworks

- Among S&P 500 companies, 18 specifically disclosed vendor AI exposure as a cybersecurity risk in their filings

When vendor AI sits outside the assessment scope, risk doesn't disappear — it just moves to a place the organization can't see or control.

How Tyson Martin Helps Organizations Get This Right

Tyson Martin's board advisory and fractional CISO/CIO work is built for organizations that need a defensible AI risk posture fast. That includes organizations entering this process for the first time, responding to a regulatory inquiry, or navigating a leadership transition where AI governance has stalled.

The value delivered is specific and measurable:

- Clarifying decision rights around AI risk: Who owns what, who escalates when, and what thresholds trigger board-level visibility

- Building a governance structure the board can actually inspect: Not theoretical frameworks, but tangible processes with owners and metrics

- Translating technical risk into plain-language reporting: Board members receive risk posture updates that show what changed since the last briefing, not technical jargon

- Standing up a 90-day plan with owners and measurable outcomes: Structured to move quickly without disrupting ongoing operations

Tyson's active roles at the World Economic Forum Centre for Cybersecurity, National Retail Federation CISO Executive Committee, and NACD keep this work grounded in current practitioner standards — the kind tested in real incidents, not just documented in frameworks. Organizations working with Tyson get governance structures that hold under pressure and reporting that boards can defend when regulators and auditors come asking.

Frequently Asked Questions

What are the 4 types of AI risk?

The four categories are data risks (security, privacy, integrity), model risks (adversarial attacks, drift, interpretability), operational risks (integration, ownership, sustainability), and ethical/legal risks (bias, compliance, reputational harm). Each affects governance, compliance, and business continuity if left unmanaged.

How is AI used in risk assessment?

AI tools can assist the risk assessment process itself by automating anomaly detection, scanning for compliance gaps, and flagging model drift. However, AI-assisted assessment still requires human judgment and governance oversight—tools surface risks, but people must own decisions.

Can AI write a risk assessment?

AI can generate draft frameworks, surface relevant risks from data, and structure documentation, but a defensible AI risk assessment requires human accountability, contextual judgment, and sign-off from authorized decision-makers. Boards cannot delegate oversight to algorithms.

What is the best AI for risk management?

No single tool fits every organization. Effective AI risk management depends on selecting tools aligned to your specific risk categories, pairing them with a recognized framework like NIST AI RMF, and confirming governance structures are in place to act on what those tools surface.

Can AI be used for risk assessment?

Yes. AI can enhance risk assessment through continuous monitoring and automated compliance checks. The critical discipline, however, is assessing the AI tools themselves — the governance feedback loop must evaluate the assessors, not just the assessed.