The practical reality is this: if your organization deploys AI systems that affect hiring decisions, customer credit, healthcare outcomes, or critical infrastructure—and you don't have documented governance, risk classifications, and human oversight in place—you're exposed. Not theoretically. Not eventually. Now.

This guide covers the global regulatory landscape as it stands in 2026, the chaotic US federal-versus-state picture, the practical risks boards and executives face when AI governance falls short, and a framework you can actually implement.

TL;DR

- The EU AI Act classifies AI by risk level: prohibited practices carry fines up to €35 million or 7% of global annual turnover, and breaches of high-risk obligations carry fines up to €15 million or 3%

- The US has no unified federal AI law — compliance means navigating conflicting executive orders, agency rules, and a growing patchwork of state laws

- Boards are accountable for AI risk regardless of whether the organization built the system or simply uses it

- Organizations operating internationally face overlapping compliance requirements that don't always align

- The practical priority: map your AI systems by risk level, assign clear ownership, and document governance decisions before regulators ask
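As a rough illustration of that last point, the sketch below shows one way an AI system register could be kept and queried. Everything in it is hypothetical: the `RiskTier` levels are a simplified internal scale rather than the legal categories of any regulation, and the `AISystem` fields, system names, and `governance_gaps` helper are assumptions made for the example.

```python
from dataclasses import dataclass
from enum import IntEnum
from typing import Optional


class RiskTier(IntEnum):
    # Hypothetical internal tiers; not the legal categories of any statute.
    MINIMAL = 1
    LIMITED = 2
    HIGH = 3


@dataclass
class AISystem:
    name: str
    use_case: str
    tier: RiskTier
    owner: Optional[str] = None         # accountable person or role
    decision_log: Optional[str] = None  # where governance decisions are recorded


def governance_gaps(systems):
    """Return (system name, list of gaps), highest-risk systems first."""
    report = []
    for s in sorted(systems, key=lambda s: s.tier, reverse=True):
        gaps = []
        if not s.owner:
            gaps.append("no owner assigned")
        if not s.decision_log:
            gaps.append("no documented governance decisions")
        if gaps:
            report.append((s.name, gaps))
    return report


# Illustrative inventory; names and paths are made up.
inventory = [
    AISystem("support-chatbot", "customer FAQ", RiskTier.LIMITED,
             owner="Head of Support", decision_log="wiki/ai/chatbot"),
    AISystem("resume-screener", "hiring shortlist", RiskTier.HIGH),
    AISystem("spam-filter", "email triage", RiskTier.MINIMAL, owner="IT Ops"),
]

for name, gaps in governance_gaps(inventory):
    print(f"{name}: {', '.join(gaps)}")
```

Sorting by tier puts the highest-risk gaps at the top of the report, which is usually where a board or regulator will look first. A real register would likely live in a GRC tool or database rather than in code, but the fields to capture are much the same.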

What Is AI Governance and Why 2026 Is a Turning Point

AI governance is the set of policies, processes, roles, and oversight structures that determine how an organization develops, deploys, and monitors AI systems, and how it accounts for the outcomes those systems produce. It is not primarily a technology discipline — it is a risk management function that sits at the intersection of technology risk, board oversight, and enterprise strategy.

That distinction matters because most organizations already have AI in production. The governance question is no longer whether to adopt AI, but whether leadership can actually see what it's doing, who owns the decisions it influences, and what happens when it causes harm.

AI governance typically spans four areas:

- Accountability structures — who owns AI decisions and who answers when they go wrong

- Risk and impact assessment — identifying harm potential before deployment, not after

- Transparency and explainability — whether decisions can be audited and explained to regulators or courts

- Ongoing monitoring — detecting drift, bias, or unexpected behavior in live systems
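One way to make these four areas concrete is to record, per system, what evidence exists for each area and flag the ones with nothing behind them. The sketch below assumes a simple dictionary of evidence per system; the area keys, the `missing_areas` helper, and the sample record are all hypothetical names chosen for illustration.

```python
# The four governance areas listed above, as hypothetical dictionary keys.
GOVERNANCE_AREAS = (
    "accountability",
    "risk_assessment",
    "transparency",
    "monitoring",
)


def missing_areas(evidence):
    """Return the governance areas with no evidence recorded, in a fixed order."""
    return [area for area in GOVERNANCE_AREAS if not evidence.get(area)]


# Illustrative evidence record for one system: area -> list of artifacts.
credit_model_evidence = {
    "accountability": ["named executive owner"],
    "risk_assessment": ["pre-deployment impact assessment"],
    "transparency": [],  # area tracked, but nothing documented yet
    # "monitoring" has no entry at all
}

print(missing_areas(credit_model_evidence))
```

An empty list and a missing key are treated the same way here, since in both cases there is nothing to show an auditor.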

Why 2026 Is the Inflection Point

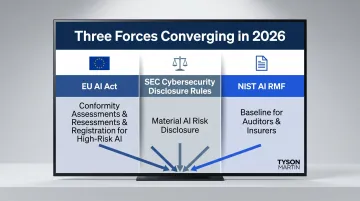

Several regulatory and market forces are converging this year in ways that make AI governance a board-level priority rather than an IT project.

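The EU AI Act entered into force in August 2024 and applies in stages: its prohibitions took effect in February 2025, obligations for general-purpose AI models followed in August 2025, and most obligations for high-risk AI systems, including conformity assessments and registration requirements, are scheduled under the Act as adopted to apply from August 2026. These requirements affect organizations that place AI systems on the EU market or use them there, wherever the organization is based. In the US, the SEC's cybersecurity disclosure rules, already in force, are increasingly being interpreted to include material AI-related risks. Meanwhile, the NIST AI Risk Management Framework, though still formally voluntary, is becoming a de facto baseline that regulators, auditors, and insurers are beginning to reference directly.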

For boards and executive teams, 2026 is the year when "we're working on AI governance" stops being an acceptable answer. Regulators, institutional investors, and counterparties want to see documented frameworks, assigned accountability, and evidence of active oversight, not statements of intent.