Introduction

Boards and executive teams face a fundamental tension: AI now drives decisions with real consequences—credit approvals, workforce scheduling, customer outcomes, pricing algorithms—but most governance frameworks were designed for systems that behaved predictably. Traditional oversight structures assumed static policies, periodic audits, and rules that held constant over time. AI systems learn, adapt, and produce probabilistic outputs that shift as data changes. The gap between how these systems work and how organizations govern them is no longer a technical problem. It's a leadership problem.

When an AI system influences whether a customer gets approved for credit, whether a candidate advances to interview, or whether a patient receives a particular treatment recommendation, the question isn't just "Is the AI accurate?" It's "Who is responsible when the output is wrong, how do we know it happened, and what do we do next?"

Most governance frameworks don't answer those questions clearly. That ambiguity creates exposure—and in regulated industries, it creates liability.

What follows is a plain-English breakdown of AI contextual governance: what it is, why traditional models break down, and what boards and executives need to do differently—without slowing the business down.

TLDR:

- AI contextual governance calibrates oversight based on real-world risk, not uniform checklists

- Traditional governance fails when AI systems learn, drift, and operate probabilistically rather than deterministically

- Adaptive governance runs on three pillars: strategic visibility, clear decision rights, and adaptive accountability

- Organizations that clarify who governs which AI systems innovate faster while reducing exposure

- Boards must define risk tolerance, approve high-stakes use cases, and set escalation thresholds that hold under real conditions

Why Traditional AI Governance Falls Short

Traditional governance was built for deterministic, rule-bound systems: enterprise resource planning software, payroll processors, static databases. Oversight followed a predictable rhythm — periodic audits, compliance checklists, siloed functions reviewing controls annually or quarterly. Policies were written once and applied uniformly. That model worked when technology behaved consistently and humans made the final call.

AI systems break that model. They learn from data, adapt over time, and produce outputs that are probabilistic rather than fixed. A credit-scoring model that has been retrained on shifted data may return a different result for identical inputs. A hiring tool that has incorporated six months of additional feedback may not behave in January the way it did the previous July.

Model drift — the degradation of performance as relationships between inputs and outputs change — means that AI systems require continuous monitoring throughout their entire lifetime, not snapshot audits.
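
As a rough illustration of what continuous monitoring can look like, the sketch below compares a baseline distribution of model scores with a recent one using the Population Stability Index (PSI), a commonly used drift statistic. The function names, the bin count, and the 0.2 alert threshold are assumptions chosen for illustration; nothing here is prescribed by a particular framework.

```python
import math
from typing import Sequence


def population_stability_index(
    baseline: Sequence[float],
    recent: Sequence[float],
    bins: int = 10,
) -> float:
    """PSI between two samples of model scores assumed to lie in [0, 1]."""
    eps = 1e-6  # stands in for empty bins so log() never sees zero

    def bin_shares(values: Sequence[float]) -> list[float]:
        counts = [0] * bins
        for v in values:
            # Clamp so out-of-range scores and exactly 1.0 land in an edge bin.
            idx = max(0, min(int(v * bins), bins - 1))
            counts[idx] += 1
        return [max(c / len(values), eps) for c in counts]

    base = bin_shares(baseline)
    new = bin_shares(recent)
    return sum((n - b) * math.log(n / b) for b, n in zip(base, new))


# Hypothetical alert level: 0.2 is a common rule of thumb, not a standard.
DRIFT_ALERT_THRESHOLD = 0.2


def needs_review(baseline: Sequence[float], recent: Sequence[float]) -> bool:
    """True when the recent scores have drifted past the alert level."""
    return population_stability_index(baseline, recent) > DRIFT_ALERT_THRESHOLD
```

Run on a schedule against each high-risk system, a check like this turns drift from something discovered at audit time into a signal that can feed an escalation path.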

The One-Size-Fits-All Problem

Applying identical controls to every AI system, regardless of context, creates two failures at once. A low-risk internal scheduling tool receives the same scrutiny as a high-stakes clinical decision support algorithm or a credit approval model — generating unnecessary friction for low-risk applications while leaving consequential systems dangerously under-supervised.

The NIST AI Risk Management Framework explicitly states that "AI risk management is highly contextual"—the level of governance required depends on the specific application, the severity of potential impacts, and the probability of harm. Organizations should scale oversight proportionally. Yet many apply maximum scrutiny everywhere (and stall innovation) or minimum scrutiny everywhere (and create exposure).

Escalation Failure

When an AI system behaves unexpectedly — rejecting qualified applicants, approving high-risk transactions, or producing outputs that don't align with business intent — most governance frameworks offer no defined path forward. Who is responsible? Who has authority to intervene? What happens next?

Without clear escalation thresholds, decision rights stay ambiguous, accountability stays diffuse, and the gap between detection and action widens.

Regulatory Acceleration

The pace of AI-specific regulation has outrun annual policy review cycles entirely. The numbers make the point plainly:

- U.S. state-level AI laws grew from 1 in 2016 to 131 in 2024 — more than doubling from 49 in 2023

- At least 72 countries have proposed over 1,000 AI-related policy initiatives globally

- The EU AI Act imposes penalties of up to €35 million or 7% of global annual turnover, whichever is higher, for the most serious violations

Organizations relying on periodic audits are accumulating governance debt faster than they can retire it.

The gap between governance design and AI behavior is a decision rights problem. Boards that haven't clarified who governs what — at what threshold and under what conditions — are exposed to both regulatory and operational consequences when something goes wrong.

What AI Contextual Governance Means in Practice

AI contextual governance is an approach where oversight rules, controls, and accountability structures adapt based on the real-world context in which an AI system operates—not as a fixed checklist applied uniformly. It means the governance framework responds proportionally to the risk level, regulatory environment, business function, and potential consequence if the decision is wrong.

Key Contextual Signals

Several signals shape how governance is calibrated:

- Business function — internal workflow tool versus customer-facing decision engine

- Data sensitivity — personal health records, financial data, or demographic information carry higher stakes than anonymized operational data

- Scope of impact — a handful of internal users versus millions of customers

- Regulatory environment — whether the use case falls under GDPR, FCRA, HIPAA, or the EU AI Act

- Reversibility of errors — whether a wrong output can be corrected quickly or creates financial harm, legal exposure, or reputational damage

These signals determine whether an AI system receives lightweight monitoring or layered review with human-in-the-loop accountability.

Risk-Tiering in Action

Contextual governance applies different levels of oversight based on risk tier:

- Low-risk automation (e.g., internal workflow scheduling, spam filtering) operates with automated monitoring, minimal documentation, and fast deployment cycles

- High-risk decisions (e.g., credit approvals, employment actions, healthcare diagnostics) require explainability mechanisms, documented decision logic, regular human review, and clear escalation paths when outputs fall outside acceptable thresholds

The EU AI Act structures this explicitly, classifying AI into four risk tiers—unacceptable, high, limited, and minimal—with different regulatory obligations for each. Organizations adopting contextual governance apply this same tiering internally, mapping oversight requirements to the actual consequences of a wrong decision rather than treating all AI systems as equivalent.
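
One way to make internal tiering concrete is to record the contextual signals for each system and derive the tier from them. The sketch below is illustrative only: the field names, the two tier labels, the scale threshold, and the rule that any single high-stakes signal raises the tier are all assumptions. A real scheme would reflect the organization's own risk tolerance and legal advice.

```python
from dataclasses import dataclass
from enum import Enum


class Tier(Enum):
    LOW = "low"
    HIGH = "high"


@dataclass(frozen=True)
class AISystemContext:
    """Contextual signals for one AI system (hypothetical field names)."""
    name: str
    customer_facing: bool
    uses_sensitive_data: bool
    regulated_use_case: bool
    errors_easily_reversed: bool
    people_affected: int


def assign_tier(ctx: AISystemContext, scale_threshold: int = 10_000) -> Tier:
    """Return HIGH if any high-stakes signal is present, otherwise LOW."""
    high_stakes = (
        ctx.uses_sensitive_data
        or ctx.regulated_use_case
        or not ctx.errors_easily_reversed
        or (ctx.customer_facing and ctx.people_affected >= scale_threshold)
    )
    return Tier.HIGH if high_stakes else Tier.LOW


# Made-up inventory entries for illustration.
scheduler = AISystemContext("shift-scheduler", False, False, False, True, 40)
credit = AISystemContext("credit-approval", True, True, True, False, 2_000_000)
print(assign_tier(scheduler).value, assign_tier(credit).value)  # low high
```

Keeping the rule in one small function makes the tiering decision itself reviewable: anyone can see why a system landed in a tier and who changed the rule.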

Business-Specific Contextual Accuracy

Risk-tiering tells you how much oversight to apply. A separate question is whether the AI is producing the right outputs for your specific environment. Business-specific contextual accuracy means evaluating AI outputs against the operational constraints, stakeholder expectations, and risk tolerance of the organization—not just against benchmark datasets or technical performance measures.

An AI model can be 95% accurate in a lab and still be wrong for your business—if it produces outputs that violate regulatory requirements, conflict with brand values, or ignore domain-specific constraints. Contextual governance asks: "Is this output acceptable given our compliance obligations and the decisions we're making?" That is a different question than "Is this output statistically accurate?"

Contextual Governance vs. Compliance Theater

Contextual governance is not about documenting policies to satisfy auditors. It is about building oversight structures that change AI behavior and create defensible, auditable decisions. Compliance theater fills binders with policies no one follows and populates dashboards no one acts on. When something goes wrong, those artifacts provide no protection. Contextual governance works differently: it defines who owns the decision, what triggers escalation, and what the threshold is for human review—before the incident, not after.

The Three Pillars of AI Contextual Governance

Three structural pillars make contextual governance operable at an organizational level.

Pillar One: Strategic Visibility

Strategic visibility means leaders can see—in near real time—how AI systems are behaving across the organization, where high-stakes decisions are occurring, and whether outputs align with business intent. This requires moving from annual audit snapshots to continuous monitoring dashboards that show trend, not trivia.

What "Trend, Not Trivia" Means:

Most AI governance dashboards surface noise: model version numbers, retraining schedules, feature counts. Strategic visibility focuses on decision-relevant signals:

- Are approval rates shifting unexpectedly?

- Are certain demographic groups receiving systematically different outcomes?

- Has the relationship between inputs and outputs changed (concept drift)?

- Are high-confidence predictions becoming less reliable over time?

Boards need visibility into whether the AI system is behaving as intended and whether that behavior aligns with organizational values and regulatory obligations.
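
To illustrate the question about systematically different outcomes, the following sketch computes approval rates per group from a decision log and flags any group whose rate falls well below that of the best-served group. The record layout, the function names, and the 0.8 ratio are assumptions. The ratio echoes the four-fifths rule of thumb from US employment selection practice and is not a legal test in itself.

```python
from collections import defaultdict
from typing import Iterable


def approval_rates(decisions: Iterable[tuple[str, bool]]) -> dict[str, float]:
    """Map each group label to its share of approved decisions."""
    approved: dict[str, int] = defaultdict(int)
    total: dict[str, int] = defaultdict(int)
    for group, was_approved in decisions:
        total[group] += 1
        approved[group] += int(was_approved)
    return {g: approved[g] / total[g] for g in total}


def flag_disparities(rates: dict[str, float], min_ratio: float = 0.8) -> list[str]:
    """Return groups whose rate is below min_ratio of the highest group rate."""
    best = max(rates.values(), default=0.0)
    if best == 0.0:
        return []
    return sorted(g for g, r in rates.items() if r / best < min_ratio)


# Made-up decision log of (group label, approved?) pairs.
log = (
    [("A", True)] * 80 + [("A", False)] * 20
    + [("B", True)] * 50 + [("B", False)] * 50
)
rates = approval_rates(log)
print(rates)                    # {'A': 0.8, 'B': 0.5}
print(flag_disparities(rates))  # ['B']
```

A flag from a check like this is a prompt for human review, not a verdict; the point is that the signal reaches someone with authority to act.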

AI Learning Capability in Business Context:

AI learning capability refers to how well an organization—not just the AI model itself—learns from AI-influenced outcomes over time. It means feeding real decision results back into governance to refine thresholds, update risk ratings, and catch model drift before it creates harm.

Organizations with strong learning capability adjust governance rules dynamically as conditions change. They don't wait for annual reviews or regulatory violations to force action.

Pillar Two: Clear Decision Rights

Decision rights define who approves AI deployment, who monitors ongoing behavior, who has authority to intervene, and who communicates with stakeholders when something goes wrong. Without this clarity, accountability diffuses across teams and the organization responds slowly under pressure.

Board vs. Management Accountability:

Decision rights must distinguish between two distinct accountability layers:

Board oversight covers setting risk tolerance, approving high-stakes AI use cases, reviewing governance frameworks during transitions (M&A, new leadership, incident response), and receiving regular trend-based reporting—not just incident alerts.

Management execution covers day-to-day monitoring, threshold adjustments, incident response, retraining decisions, and operational oversight.

According to NACD guidance, boards should ensure AI systems "remain aligned with the company's overall mission, purpose, values, and ethics," while management defines data quality standards, conducts impact assessments, and certifies model safety.

Clear decision rights make strategic visibility actionable — boards can only act on what they see if they know exactly who owns the response.

Pillar Three: Adaptive Accountability

Accountability in AI governance must be adaptive—it should tighten as risk increases and relax proportionally when risk is lower, rather than applying maximum scrutiny everywhere or minimum scrutiny everywhere.

Calibrating Oversight to Risk Level:

- High-impact decisions require defined human-in-the-loop review, explainability documentation, and audit trails

- Low-risk use cases rely on automated monitoring with exception-based escalation

- Clear escalation paths specify when AI behavior moves outside established thresholds and who is notified at each level

Organizations with adaptive accountability keep low-risk innovation moving. They don't add approval layers where none are needed — and they don't strip controls from high-stakes decisions because governance feels burdensome. The practical result: faster deployment where it's safe, tighter oversight where it matters.
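
The escalation logic described above can be sketched as a lookup from risk tier and observed deviation to the roles that must be notified. Everything named here (the tier labels, the deviation bands, the role titles) is a hypothetical placeholder for whatever an organization actually defines.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class EscalationRule:
    """Deviation from the agreed baseline (as a fraction) at which a role is notified."""
    min_deviation: float
    notify: str


# Hypothetical ladders: high-risk systems escalate sooner and reach higher.
ESCALATION_LADDERS: dict[str, list[EscalationRule]] = {
    "low": [
        EscalationRule(0.20, "system owner"),
    ],
    "high": [
        EscalationRule(0.05, "system owner"),
        EscalationRule(0.10, "risk committee"),
        EscalationRule(0.20, "board"),
    ],
}


def who_to_notify(tier: str, deviation: float) -> list[str]:
    """Return every role whose threshold the observed deviation has reached."""
    return [
        rule.notify
        for rule in ESCALATION_LADDERS[tier]
        if deviation >= rule.min_deviation
    ]


print(who_to_notify("low", 0.12))   # []
print(who_to_notify("high", 0.12))  # ['system owner', 'risk committee']
```

The same 12% deviation is ignored for a low-risk tool and reaches the risk committee for a high-risk one, which is the proportionality this pillar calls for.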

How Contextual Governance Drives Business Evolution

Well-designed contextual governance accelerates innovation rather than restricting it. When teams understand clearly what is governed tightly versus what can move quickly, they stop seeking unnecessary approvals for low-risk work and focus oversight energy where it actually matters.

Three Dimensions of Business Evolution:

Contextual governance enables evolution across three dimensions:

- Structural: Decision chains adapt as AI handles routine analysis; roles shift from manual review to oversight and exception management; speed of execution accelerates for low-risk applications

- Cultural: Employees learn to critically oversee AI rather than either blindly trust or resist it; transparent documentation and accountability build internal confidence

- Strategic: Planning becomes more iterative and data-responsive; governance infrastructure becomes a strategic enabler rather than a compliance checkbox

Trust Compounds Over Time:

Organizations that handle AI-influenced decisions transparently and fairly, especially in high-stakes moments, build stakeholder confidence that becomes a durable competitive asset. That confidence is earned through consistent, defensible decisions under real conditions, not through messaging. Research shows that 58% of executives report Responsible AI initiatives improve ROI and organizational efficiency, and 55% report enhanced customer experience.

The trust gap between high- and low-maturity organizations shows up in the numbers. In high-maturity AI organizations, 57% of business units trust and are ready to use new AI solutions, compared to only 14% in low-maturity organizations. High-maturity organizations also keep AI projects operational for 3+ years at a rate of 45%, versus 20% in low-maturity organizations. That gap reflects governance infrastructure, not just technical capability.

Who Owns AI Governance: Board and Management Accountability

The accountability split between board and management is often left ambiguous, and that ambiguity creates escalation failure during critical moments.

The Board's Role:

- Set risk tolerance for AI use cases across the organization

- Approve high-stakes AI deployments that affect customers, employees, or finances at scale

- Review and approve governance frameworks during significant transitions—M&A, new leadership, post-incident recovery, rapid AI deployment

- Receive regular trend-based reporting on AI system behavior, not just incident alerts

Management's Role:

- Day-to-day oversight and continuous monitoring

- Threshold adjustments based on performance data and drift detection

- Incident response and escalation when AI behavior moves outside acceptable bounds

- Certification of model safety, data quality, and alignment with governance policies

Critical Questions Boards Should Ask:

- What AI systems are making decisions that affect customers, employees, or finances?

- What is our risk tier for each system, and who assigned it?

- Who is monitoring AI behavior continuously, and what triggers escalation to the board level?

- How do we know if an AI system is behaving as intended, and what happens when it's not?

Governance Exposure During Transitions:

Organizations in transition face the highest governance exposure because decision rights are often undefined or inherited without review. As Logicalis notes, "governance rarely fails when people ignore policies; it fails when the organization no longer resembles the structure for which policies were designed."

When accountability gaps appear during a transition, boards need clear answers fast: who owns each AI system's behavior, who can authorize a shutdown, and what the escalation threshold is before a risk becomes an incident. A board advisor or fractional CISO with AI governance experience can define those boundaries before the next transition tests them.

Getting Started: Building an Adaptive AI Governance Framework

Begin with an inventory: map every AI system influencing real decisions—customer outcomes, employee actions, financial approvals—and assign a risk tier to each based on contextual factors.

Risk Classification Criteria:

- Data sensitivity — personal, financial, or regulated data raises the tier

- Regulatory exposure — some use cases trigger industry-specific or jurisdictional AI rules

- Decision impact — wrong outputs that affect customers, employees, or finances demand higher scrutiny

- Reversibility — decisions that create lasting harm require stricter controls than easily corrected ones

Those tiers determine what governance controls apply. A well-structured framework covers four components:

Core Framework Components:

- Risk classification that updates as regulatory and operational conditions shift

- Decision rights assigned to named owners at both board and management level

- Monitoring dashboards tracking behavioral trends, not just technical metrics

- Escalation thresholds that define when human review is mandatory and who is notified at each level

Design for Inspectability:

Every AI-influenced decision should be traceable, explainable, and auditable:

- Document input data, decision logic, and output rationale for each system

- Maintain audit trails capturing who approved deployment, who monitors performance, and who intervened when thresholds were exceeded

- Calibrate explainability to risk level — streamlined for low-risk systems, detailed for high-stakes decisions
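
As a minimal sketch of what one audit-trail entry might capture, the example below stores each AI-influenced decision as a JSON line in an append-only list. The field names and the `record_decision` helper are illustrative inventions, not a standard schema.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone
from typing import Optional


@dataclass
class DecisionRecord:
    """One AI-influenced decision, with enough context to reconstruct it later."""
    system: str
    model_version: str
    inputs: dict
    output: str
    rationale: str
    reviewed_by: Optional[str] = None  # named human reviewer, if any
    timestamp: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )


def record_decision(record: DecisionRecord, trail: list[str]) -> None:
    """Append the record to the trail as one JSON line."""
    trail.append(json.dumps(asdict(record), sort_keys=True))


# Made-up example entry.
trail: list[str] = []
record_decision(
    DecisionRecord(
        system="credit-approval",
        model_version="2024-06",
        inputs={"income_band": "C", "tenure_months": 18},
        output="refer",
        rationale="score below auto-approve threshold",
        reviewed_by="j.doe",
    ),
    trail,
)
print(trail[0])
```

For a low-risk system the rationale might be a one-line reason code; for a high-stakes decision the same record could point to fuller explainability output.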

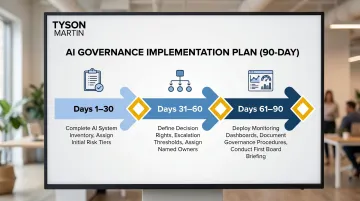

90-Day Plan, Not Multi-Year Project:

Governance frameworks stall when they become open-ended projects. A 90-day phased plan keeps execution on track:

- First 30 days: Complete AI system inventory and assign initial risk tiers

- Days 31-60: Define decision rights and escalation thresholds; assign named owners

- Days 61-90: Deploy monitoring dashboards and document governance procedures; conduct first board briefing

Research shows that 50% of organizations cite operationalization as their biggest hurdle to scaling governance. Time-bounded, phased approaches overcome that barrier.

Frequently Asked Questions

What is business-specific contextual accuracy in AI governance?

Business-specific contextual accuracy means evaluating AI outputs against your organization's operational constraints, stakeholder expectations, and risk tolerance rather than generic benchmarks. A technically accurate AI output can still be the wrong answer if it violates regulatory requirements or conflicts with your organization's values and constraints.

What are the three pillars of AI governance?

The three pillars are strategic visibility, clear decision rights, and adaptive accountability. Strategic visibility means continuous insight into AI behavior; clear decision rights define who owns deployment, monitoring, and escalation; adaptive accountability adjusts oversight intensity based on risk level.

What is AI learning capability in a business context?

AI learning capability refers to how well an organization feeds outcomes from AI-influenced decisions back into its governance process. That means refining thresholds, updating risk ratings, and catching drift continuously, so governance evolves alongside the AI system rather than only at scheduled reviews.

How does AI contextual governance differ from traditional AI governance?

Traditional governance applies static, uniform rules across all AI systems regardless of risk level or context. Contextual governance applies oversight proportionally based on the use case, risk tier, regulatory environment, and real-time conditions, allowing faster movement on low-risk applications while maintaining rigorous oversight where it matters most.

When should a board get involved in AI governance decisions?

Boards should set AI risk tolerance, approve high-stakes use cases affecting customers, employees, or finances at scale, and review governance frameworks during significant transitions such as M&A, leadership changes, or incident response. Regular trend-based reporting on AI behavior belongs on the board agenda before problems surface, not after.