Introduction

Most boards and executive teams face an uncomfortable truth: their organizations have documented incident response plans sitting on the shelf, yet far fewer have tested whether the people in the room can actually execute them under pressure. The World Economic Forum's Global Cybersecurity Outlook 2026 reveals a striking gap: whilst 64% of organizations claim to meet minimum cyber resilience requirements, only 27% actually simulate cyber incidents with their ecosystem partners. Among insufficiently resilient organizations, 37% cite poor incident response planning as a top challenge.

Tools, playbooks, and certifications are table stakes. What separates operationally ready organizations from vulnerable ones is whether leadership has practiced making decisions in a simulated crisis—before a real one forces the issue.

That gap has a measurable cost. IBM's Cost of a Data Breach Report 2024 found organizations with high levels of incident response planning and testing averaged $4.15M per breach versus $5.10M for those with low levels—a $950,000 difference.

This post covers what cyber crisis simulations are, what they expose that traditional training misses, how to design and run one effectively, and what boards and executives should be demanding from their simulation programmes.

TL;DR: Key Takeaways

- A cyber crisis simulation puts your response plan and the people responsible for it under realistic pressure to expose gaps before attackers do

- Simulations reveal what actually happens when decision-makers face stress and incomplete information, not what the documentation assumes will happen

- Well-designed simulations test decision rights, escalation thresholds, and cross-functional coordination, not just technical response capability

- The best outcome: a short list of governance fixes, updated authorities, and measurable action items with owners

What Is a Cyber Crisis Simulation?

A cyber crisis simulation is a controlled, scenario-driven exercise in which key stakeholders respond to a realistic cyberattack as if it were happening in real time—without actual systems being compromised. These scenarios typically include ransomware incidents, data breaches, third-party compromises, or business email compromise events tailored to the organization's risk profile.

There are two main formats, each serving distinct purposes:

Tabletop Exercises

Discussion-based and lower intensity, tabletop exercises walk participants through scenario updates at a measured pace. The focus is on communication flow and decision logic rather than speed.

Best for:

- Testing coordination across functions and leadership levels

- Identifying unclear decision authorities before an incident exposes them

- Building shared understanding of escalation paths and roles

Simulation Exercises

Immersive and real-time, simulations put participants under genuine pressure. Scenario injects arrive as events unfold, forcing actual decisions with incomplete information while the clock runs.

Best for:

- Testing whether documented procedures hold under realistic time constraints

- Exposing gaps in stress response and execution capability

- Validating that coordination established in tabletops actually works when stakes feel real

The choice between formats depends on what the organization needs to test and its current maturity level. Tabletops build the foundation; simulations prove whether it holds.

Why Your Playbooks Aren't Enough

Incident response plans and playbooks are written under calm conditions by people who have time to think. A real crisis is the opposite—information is fragmented, pressure is high, roles get confused, and the chain of command either holds or it doesn't. No playbook can account for human behavior under stress.

The Documentation Gap

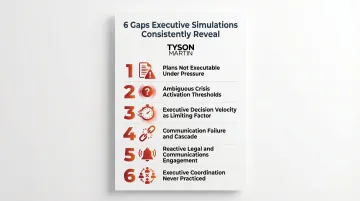

Organizations routinely confuse having a plan with being prepared. The SANS Institute's 2026 analysis of executive cyber exercises identified 10 systemic gaps that simulations consistently reveal, including:

- Crisis plans are documented but not executable under pressure

- Crisis activation thresholds are ambiguous—no clear authority to declare a crisis

- Executive decision velocity is the actual limiting factor, not technical detection speed

- Communication fails early and cascades—conflicting internal messages, unclear ownership

- Legal and communications teams are engaged reactively, not as integrated response partners

- Executive coordination has never been practiced at scale

These aren't technical failures. They're governance issues: unclear decision rights (who can authorise a system shutdown?), broken escalation paths (who calls the board, and when?), and communication breakdowns between IT, legal, and executive teams.

The Business Case for Testing

The financial impact of this preparation gap is measurable. Beyond the $950,000 difference between organizations with high and low levels of IR planning and testing, IBM found that IR planning and testing reduced average breach costs by $248,106 relative to the global average. It was also the most popular area of security investment among surveyed organizations (55%).

The IBM report explicitly recommends organizations "build up their muscle memory for breach responses by participating in cyber range crisis simulation exercises."

Why Boards and Executives Should Care

Regulators, insurers, and shareholders now expect demonstrable proof of preparedness, not just policy documentation. The SEC's cybersecurity disclosure rules, adopted in July 2023, require public companies to disclose material cybersecurity incidents on Form 8-K within four business days of determining that an incident is material, and to report annually in Form 10-K on board oversight of cybersecurity risk. The EU's Digital Operational Resilience Act (DORA) mandates resilience testing programs for financial entities, with tests required at least yearly for ICT systems supporting critical or important functions.
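Exercises that test the regulatory notification process often stumble on the SEC clock itself: the four business days run from the date the company determines an incident is material, not from the date it is discovered, and weekends do not count. A minimal Python sketch of that arithmetic, assuming a simplified calendar that skips Saturdays, Sundays, and whatever holiday dates the caller supplies (the function name is hypothetical):

```python
from datetime import date, timedelta


def sec_disclosure_deadline(
    materiality_determined: date,
    holidays: frozenset = frozenset(),
    business_days: int = 4,
) -> date:
    """Date falling `business_days` business days after the materiality determination.

    Simplified assumption: counts Monday to Friday only and skips any dates in
    `holidays`. The caller is responsible for supplying the relevant holiday calendar.
    """
    remaining = business_days
    current = materiality_determined
    while remaining > 0:
        current += timedelta(days=1)
        # weekday(): Monday == 0 ... Friday == 4, Saturday == 5, Sunday == 6
        if current.weekday() < 5 and current not in holidays:
            remaining -= 1
    return current


# Materiality determined on Thursday 2 May 2024: Fri 3, Mon 6, Tue 7, Wed 8.
print(sec_disclosure_deadline(date(2024, 5, 2)))  # 2024-05-08
```

Injecting the materiality determination late on a Thursday, or just before a holiday, is a simple way to see whether legal and communications teams know when the clock actually expires.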

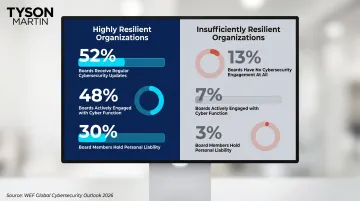

Personal accountability is rising alongside these mandates. The WEF 2026 report found that 30% of highly resilient organizations have board members who hold personal liability for cyber breaches, compared to only 9% in insufficiently resilient organizations.

Simulations are the only training method that tests the full decision chain—from technical response through executive communication to regulatory notification—under realistic time pressure. Certifications and awareness training build individual capability. Only simulations test whether the organization executes as one.

What a Well-Designed Simulation Actually Tests

Decision Rights Under Pressure

The simulation should force participants to confront ambiguous scenarios where authority to act is unclear. Common reveals include:

- Executives who assume someone else has escalation authority

- Security teams lacking pre-approved authority to isolate systems without going up the chain

- Multiple stakeholders believing they control the same decision

This is a governance finding, not a technical one. Decision rights must be documented, communicated, and practiced before a crisis. Negotiating them mid-incident is too late.
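The decision-rights failures above (nobody holding a decision, or several people claiming it) can be surfaced before the exercise by asking each role which decisions it believes it owns and comparing the answers. A minimal Python sketch, assuming claims are collected as (role, decision) pairs; the function, roles, and decision names are hypothetical:

```python
from collections import defaultdict


def audit_decision_rights(
    claims: list[tuple[str, str]], required: list[str]
) -> dict[str, list[str]]:
    """Flag required decisions that nobody claims or that several roles claim."""
    owners: dict[str, set[str]] = defaultdict(set)
    for role, decision in claims:
        owners[decision].add(role)

    report: dict[str, list[str]] = {"unowned": [], "contested": []}
    for decision in required:
        holders = owners.get(decision, set())
        if not holders:
            report["unowned"].append(decision)  # everyone assumes someone else decides
        elif len(holders) > 1:
            report["contested"].append(decision)  # several roles believe they decide
    return report


claims = [
    ("CISO", "isolate production systems"),
    ("CIO", "isolate production systems"),
    ("General Counsel", "notify regulator"),
]
required = ["isolate production systems", "notify regulator", "notify board"]
print(audit_decision_rights(claims, required))
# {'unowned': ['notify board'], 'contested': ['isolate production systems']}
```

Every entry in either list is a decision right to document and assign before the exercise, or a gap to watch for during it.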

Escalation Thresholds and Board Notification

A well-designed scenario tests whether the organization has clear, pre-defined criteria for when the board gets notified, and whether that process holds under pressure. Boards are often notified too late, with incomplete information, framed in technical language that doesn't support decision-making.

WEF 2026 data highlights how uneven board engagement remains:

- Only 16% of organizations with industrial/OT environments report OT security issues to their boards

- Among highly resilient organizations, 52% of boards receive regular updates and 48% are actively engaged with the cyber function

- Among insufficiently resilient organizations, 13% report their board has no cybersecurity engagement at all
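Pre-defined board notification criteria are easiest to test when they are written precisely enough to evaluate mechanically as injects change the picture. A minimal Python sketch, assuming three illustrative criteria and a four-hour outage threshold; the criteria, threshold, and names are hypothetical, and each organization would set and approve its own:

```python
from dataclasses import dataclass

# Hypothetical threshold: each organization sets and approves its own.
OUTAGE_HOURS_THRESHOLD = 4.0


@dataclass
class IncidentState:
    critical_systems_down: int
    outage_hours: float
    data_exfiltration_confirmed: bool
    ransom_demand_received: bool


def board_notification_triggers(state: IncidentState) -> list[str]:
    """Return the plain-language criteria met; any non-empty result means notify the board."""
    triggers = []
    if state.critical_systems_down > 0 and state.outage_hours >= OUTAGE_HOURS_THRESHOLD:
        triggers.append(f"critical systems down for {state.outage_hours:g} hours")
    if state.data_exfiltration_confirmed:
        triggers.append("confirmed data exfiltration")
    if state.ransom_demand_received:
        triggers.append("ransom demand received")
    return triggers


after_inject_3 = IncidentState(
    critical_systems_down=2,
    outage_hours=5,
    data_exfiltration_confirmed=False,
    ransom_demand_received=True,
)
print(board_notification_triggers(after_inject_3))
# ['critical systems down for 5 hours', 'ransom demand received']
```

Returning the triggered criteria as plain-language strings, rather than a bare yes or no, gives the board briefing an opening line framed for decision-making instead of technical detail.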

Cross-Functional Coordination

The most consistent failure mode in simulations is breakdown between IT, legal, communications, and executive leadership:

- Legal teams wanting to slow the response to assess liability

- Communications needing guidance from IT that isn't forthcoming

- Executives making statements before legal has cleared them

- Technical teams providing updates that non-technical stakeholders can't interpret

This coordination must be practiced, not assumed. The SANS analysis found that legal and communications teams are typically engaged reactively. Brought in only after technical escalation, they frequently miss regulatory reporting timelines and produce inconsistent external messaging.

Quality of Communication to Decision-Makers

Simulations should include a component where someone must brief executive leadership or the board mid-crisis. This tests whether the CISO can communicate risk and recommended actions in plain language that supports decisions. It's a skill technical training rarely builds.

When communication breaks down under pressure, decision-makers fill the gap with assumptions, and unwinding decisions built on wrong assumptions costs time the organization does not have.

Realistic Scenario Relevance

The scenarios must reflect the organization's actual risk profile, covering the threats specific to its industry, its third-party dependencies, and its regulatory obligations. A generic ransomware scenario is less valuable than one built around the specific systems and business processes that matter most to that organization.

Verizon's 2025 Data Breach Investigations Report found that the human element was involved in approximately 60% of breaches, with credential abuse (22%) and social engineering (17%) as dominant initial access vectors. Scenarios should prioritize human-initiated attack paths (phishing, credential theft, and insider threats), not just technical exploits.

The Three Phases of an Effective Cyber Crisis Simulation

Preparation: Define the Objectives and Design the Scenario

The most important work happens before the exercise. Organizations should define what they are testing—decision rights? Board communication? Regulatory notification process?—and build the scenario to pressure-test exactly that. Avoid the common mistake of designing a scenario that confirms competence rather than genuinely challenging the team.

The scenario should be tailored to real threat intelligence and the organization's actual risk landscape. An interim or fractional CISO with cross-enterprise experience brings two things most internal teams can't replicate: scenarios calibrated to current attacker behavior, and a structure designed to expose governance gaps—not just technical ones.

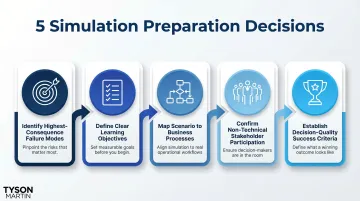

Once the testing focus is set, preparation comes down to five decisions:

- Identify the highest-consequence failure modes specific to your organization

- Define clear learning objectives (not just "test the plan")

- Map scenario events to real business processes and dependencies

- Confirm that non-technical stakeholders will participate actively, not observe

- Establish success criteria based on decision quality, not just speed
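One common way to choose the highest-consequence failure modes (a convention assumed here, not one this post prescribes) is to score each candidate for likelihood and impact on a 1 to 5 scale and build the scenario around the top few by product. A minimal Python sketch; the function, the failure modes, and their scores are hypothetical:

```python
def rank_failure_modes(
    modes: dict[str, tuple[int, int]], top_n: int = 3
) -> list[tuple[str, int]]:
    """Rank failure modes by likelihood x impact (each scored 1-5); keep the top_n."""
    scored = []
    for name, (likelihood, impact) in modes.items():
        if not (1 <= likelihood <= 5 and 1 <= impact <= 5):
            raise ValueError(f"{name}: scores must be between 1 and 5")
        scored.append((name, likelihood * impact))
    # Highest score first; ties broken alphabetically so the ranking is repeatable.
    scored.sort(key=lambda item: (-item[1], item[0]))
    return scored[:top_n]


candidates = {
    "ransomware halts order processing": (4, 5),
    "stolen credentials expose supplier portal": (4, 4),
    "business email compromise redirects a payment": (3, 4),
    "insider exports customer records": (2, 5),
}
for name, score in rank_failure_modes(candidates):
    print(score, name)
```

Agreeing on the scores with business owners, not just the security team, is also a useful check that the scenario maps to real business processes and dependencies.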

Execution: Run the Exercise with Rigor

The mechanics of the exercise include injects (scenario updates) delivered in real time, participants responding in their actual roles, and decisions being made and documented. The goal is realistic pressure, not a rehearsed performance. Participants should be making decisions with incomplete information. That discomfort is the point.

That pressure only holds if the right people are in the room. Legal, communications, and executive leadership must be active participants—not observers. Their decisions—when to notify regulators, how to communicate with customers, whether to engage law enforcement—are as consequential as anything the technical team does.

During execution:

- Deliver injects at realistic intervals (not so fast that participants can't think, not so slow that pressure dissipates)

- Capture decisions, questions, and confusion points in real time

- Let failures happen—don't intervene to "fix" the exercise mid-stream

- Observe communication patterns, decision authority, and coordination breakdowns
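A facilitator's log needs no special tooling, but structuring it makes the after-action review easier: release injects at planned offsets, timestamp every decision, question, and confusion point, and measure how long it takes for a decision to follow each inject. A minimal Python sketch; the class names, entry kinds, and inject wording are hypothetical:

```python
from dataclasses import dataclass, field
from datetime import datetime, timedelta
from typing import Optional

ENTRY_KINDS = {"decision", "question", "confusion"}


@dataclass
class Inject:
    offset_minutes: int  # minutes after the exercise starts
    description: str


@dataclass
class ExerciseLog:
    start: datetime
    entries: list = field(default_factory=list)  # (timestamp, kind, note)

    def release_schedule(self, injects: list) -> list:
        """Wall-clock release time for each inject, in delivery order."""
        ordered = sorted(injects, key=lambda inj: inj.offset_minutes)
        return [(self.start + timedelta(minutes=inj.offset_minutes), inj.description)
                for inj in ordered]

    def record(self, when: datetime, kind: str, note: str) -> None:
        if kind not in ENTRY_KINDS:
            raise ValueError(f"unknown entry kind: {kind}")
        self.entries.append((when, kind, note))

    def minutes_to_decision(self, released: datetime) -> Optional[float]:
        """Minutes from an inject's release to the next recorded decision, if any."""
        later = [when for when, kind, _ in self.entries
                 if kind == "decision" and when >= released]
        if not later:
            return None
        return (min(later) - released).total_seconds() / 60


log = ExerciseLog(start=datetime(2025, 3, 4, 9, 0))
schedule = log.release_schedule([
    Inject(30, "Ransom note appears"),
    Inject(0, "SOC flags unusual encryption"),
])
log.record(datetime(2025, 3, 4, 9, 5), "question", "Who can authorise isolating file servers?")
log.record(datetime(2025, 3, 4, 9, 18), "decision", "CIO isolates file servers")
print(log.minutes_to_decision(schedule[0][0]))  # 18.0
print(log.minutes_to_decision(schedule[1][0]))  # None
```

The timing measures the gap to the next recorded decision, so the facilitator may still need to confirm which inject a decision was actually responding to.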

After-Action Review: Extract Actionable Governance Findings

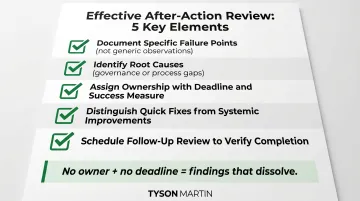

The after-action review is where the exercise pays off. It should produce a clear record of what worked, what failed, and what needs to change—with specific owners and timelines attached. Without that structure, the findings dissolve within weeks and the same gaps resurface in the next incident.

Effective after-action reviews:

- Document specific failure points, not generic observations

- Identify root causes (usually governance or process gaps, not individual mistakes)

- Assign ownership for each finding with a deadline and success measure

- Distinguish between quick fixes and systemic improvements

- Schedule a follow-up review to verify completion
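These practices map naturally onto a simple findings register: each finding carries its root cause, owner, deadline, success measure, and whether it is a quick fix or a systemic improvement, and the follow-up review asks what is still open and what is overdue. A minimal Python sketch; the field names, findings, and dates are hypothetical:

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass
class Finding:
    description: str        # the specific failure point observed
    root_cause: str         # usually a governance or process gap
    owner: str
    deadline: date
    success_measure: str    # how completion will be verified
    systemic: bool          # False = quick fix, True = systemic improvement
    completed_on: Optional[date] = None


def follow_up_report(findings: list, review_date: date) -> dict:
    """Summarize open and overdue findings for the scheduled follow-up review."""
    open_items = [f for f in findings if f.completed_on is None]
    return {
        "open_quick_fixes": sum(1 for f in open_items if not f.systemic),
        "open_systemic": sum(1 for f in open_items if f.systemic),
        "overdue": [f"{f.owner}: {f.description}" for f in open_items
                    if f.deadline < review_date],
    }


findings = [
    Finding("No one authorised to isolate file servers", "Decision rights undocumented",
            "CIO", date(2025, 4, 30), "Authority matrix approved by ExCo", systemic=True),
    Finding("Board call list out of date", "No owner for contact list",
            "Company Secretary", date(2025, 3, 31), "List verified in next tabletop",
            systemic=False, completed_on=date(2025, 3, 20)),
    Finding("Legal joined two hours after activation", "Legal not in activation roster",
            "General Counsel", date(2025, 3, 31), "Legal paged at crisis activation",
            systemic=False),
]
print(follow_up_report(findings, review_date=date(2025, 4, 15)))
```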

From Exercise to Governance: Turning Findings into Action

The output of a simulation should not sit in a binder. The most common and highest-value findings translate directly into governance improvements:

- Updated decision rights documentation

- Revised escalation thresholds

- Clearer board notification criteria

- Improved cross-functional communication protocols

Each finding should have an owner, a deadline, and a way to verify completion. That accountability structure is what converts simulation findings into actual readiness: the exercise identifies the gap, and the governance work closes it. Organizations should track metrics across simulation cycles to demonstrate improving readiness over time.
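Tracking readiness across simulation cycles needs only a few metrics captured the same way each time. A minimal Python sketch, assuming two illustrative metrics (median minutes from inject to decision, and the share of findings closed by their deadline); the metric choice and names are hypothetical, not a standard:

```python
from statistics import median


def cycle_metrics(decision_minutes: list, findings_total: int, closed_on_time: int) -> dict:
    """Summarize one simulation cycle."""
    if findings_total <= 0:
        raise ValueError("findings_total must be positive")
    return {
        "median_minutes_to_decision": median(decision_minutes),
        "on_time_closure_rate": closed_on_time / findings_total,
    }


def improved(previous: dict, current: dict) -> dict:
    """Lower decision time and higher on-time closure both count as improvement."""
    return {
        "faster_decisions": current["median_minutes_to_decision"]
        < previous["median_minutes_to_decision"],
        "better_follow_through": current["on_time_closure_rate"]
        > previous["on_time_closure_rate"],
    }


cycle_1 = cycle_metrics([30, 45, 60], findings_total=10, closed_on_time=6)
cycle_2 = cycle_metrics([20, 25, 40], findings_total=8, closed_on_time=7)
print(improved(cycle_1, cycle_2))
# {'faster_decisions': True, 'better_follow_through': True}
```

Faster decisions only count as improvement if decision quality holds, so these figures belong alongside the after-action narrative, not in place of it.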

Cadence is part of the governance structure, not an afterthought. Organizations should run full simulations at least annually, with targeted tabletop exercises in between, and increase frequency during periods of elevated risk, organizational change, or after a major incident affects a peer organization.

Regulatory expectations are moving in the same direction. The EU's DORA regulation mandates at least yearly testing for ICT systems supporting critical or important functions, a benchmark that boards in regulated industries should treat as a floor, not a ceiling.

What Boards and Executives Should Demand From Their Simulation Programme

Boards should be asking three direct questions:

- Has a simulation been conducted in the last 12 months?

- What did it find?

- What was fixed as a result?

If any of the three lacks a clear answer, the organization's cyber readiness is undocumented and likely untested. This is an oversight responsibility, not a technical question. The National Association of Corporate Directors reports that 58% of Fortune 100 companies now disclose the use of tabletop exercises (up from just 3% in 2019), reflecting the rapid shift from optional to baseline governance practice.

Executives should require simulation findings to feed directly into risk reporting — on a regular cadence, not as a one-time compliance checkbox. That reporting tells the board exactly where readiness holds and where it breaks down.

The simulation programme should be treated as a business investment with a measurable return. Organizations with high levels of IR planning and testing averaged roughly $950,000 less per breach than those with low levels. That return shows up in tangible outcomes:

- Faster breach containment

- Lower incident response costs

- Cleaner regulatory response

- Board-level confidence when a real crisis hits

Frequently Asked Questions

What is a cyber crisis simulation?

A cyber crisis simulation is a controlled, scenario-driven exercise where key stakeholders practice responding to a realistic cyberattack in real time. It's designed to expose gaps in decision-making, communication, and governance before a real incident occurs.

What is a cyber attack simulation?

A cyber attack simulation is a technical exercise replicating attacker behaviour against actual or virtual systems, often used by security teams to test defences. A cyber crisis simulation focuses on the human and organizational response—decisions, communication, and governance under pressure.

Where do most cyber incidents begin?

The Verizon 2025 Data Breach Investigations Report found that approximately 60% of breaches involved the human element. Credential abuse (22%) and social engineering (17%) were the leading entry points. This is why crisis simulations must involve non-technical stakeholders, not just the security team.

What is the 80-20 rule in cybersecurity?

The Pareto principle applied to cybersecurity holds that roughly 80% of cyber risk can be reduced by addressing 20% of the most critical vulnerabilities or behaviors — a framing CISA's Secure by Design guidance explicitly references. In simulation design, that means prioritizing the highest-consequence failure modes rather than trying to test everything at once.

How often should an organization run cyber crisis simulations?

At minimum, conduct one full simulation and one to two tabletop exercises per year. Increase frequency during major organizational changes, new regulatory requirements, or significant industry incidents. Most importantly, ensure findings are acted on—cadence matters less than follow-through.